Neuroscience is a humbling enterprise. As a wise person once said, if the human brain were so simple that we could understand it, we would be so simple that we couldn’t. But still, we make progress, with implications for medicine, artificial intelligence, education, and philosophy. So, what is the future of the study of the brain, and what is the future of the brain itself?

In November, about 30 neuroscientists, entrepreneurs, and computer scientists (and a former head of NASA) gathered for a two-day discussion about the future of the brain. The summit was an iteration of the Future Trends Forum, organized by the nonprofit Foundation Innovation Bankinter.

The Spanish science writer Pere Estupinya and I were asked to offer commentary during the event. Below is a version of the synthesis (and provocation) I presented.

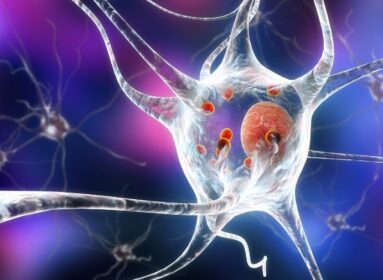

The discussion followed several themes. Presenters highlighted ways that computer science is helping neuroscience. Alex Fornito, a cognitive neuroscientist at Monash University, in Australia, scans brains using diffusion MRI, which tracks water molecules to trace anatomical connectivity. He then uses algorithms to segment the brain into different regions, forming a network of nodes and the links between them. Using network analysis, he can identify the dysfunctions of ADHD, schizophrenia, and Alzheimer’s disease.
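For readers who think in code, the idea of a brain as nodes and links can be sketched in a few lines. This is only a toy illustration, not Fornito's actual method: the region labels, the connections, and the degree threshold below are all made up, and real analyses work with far larger networks and richer measures.

```python
from collections import defaultdict

# Hypothetical region labels and connections, invented for illustration only.
edges = [
    ("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("D", "E"),
]

def degree_by_node(edge_list):
    """Count how many links touch each node (its degree)."""
    degrees = defaultdict(int)
    for a, b in edge_list:
        degrees[a] += 1
        degrees[b] += 1
    return dict(degrees)

def find_hubs(edge_list, min_degree):
    """Return nodes whose degree meets an assumed, arbitrary threshold."""
    degrees = degree_by_node(edge_list)
    return sorted(node for node, d in degrees.items() if d >= min_degree)

degrees = degree_by_node(edges)
hubs = find_hubs(edges, min_degree=3)
print(degrees)
print(hubs)
```

Counting links per node is about the simplest network measure there is; it is used here only because it makes the notion of a highly connected "hub" concrete.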

Sean Hill, a neuroscientist at the University of Toronto, scans microcircuits in mouse brains and creates digital reconstructions, allowing him to model network oscillations. He also uses high-resolution scans of entire mouse brains to predict the locations of synapses across the brain. Other researchers talked about using computers to interpret signals from EEG electrodes, body sensors, and brain implants.

Several people talked about going the other way, using neuroscience to improve AI. Even a child can solve problems a supercomputer can’t while expending much less energy. Can we reverse engineer some of biology’s tricks?

Fornito talked about tradeoffs between time, space, and material. Some network modules conserve space and material by placing neurons near each other, while hubs that integrate information use thick, fast connections that conserve time at the expense of space and material. Antonio Damasio, a neuroscientist at the University of Southern California, impressed on us the abilities of organisms that don’t even have a central nervous system. Merely maintaining homeostasis is a feat. Speaking of robots, he said that if we gave them soft bodies and made them vulnerable, they might develop feelings and self-regulation.

Rodrigo Quian, a bioengineer at the University of Leicester, discussed his discovery of “Jennifer Aniston neurons,” cells that respond selectively to a person (such as Jennifer Aniston), whether someone sees a picture, a drawing, or just a name. More broadly, he spoke of “concept cells” and noted that humans are flexible and efficient thinkers—unlike many AI agents—because we can abstract from experience without being beholden to the details.

Many people discussed ethics. Walter Greenleaf, a neuroscientist at Stanford, asked if gene editing would diminish neurodiversity. David Bueno, a geneticist at the University of Barcelona, said we might tailor people’s educations based on their brains, but raised the possibility of inequality in cognitive enhancement. He also noted that widespread monitoring of brain activity could invade privacy.

Amanda Pustilnik, a lawyer at the University of Maryland, said someone’s brain data might alert him when he’s about to have a manic episode, but it could also go to a data broker, and he could start seeing ads for online gambling. Or it could make its way to an employer. We need a NINA, or Neuroscience Information Nondiscrimination Act, she said. Pustilnik also argued that neurotechnology should promote autonomy, rather than a deterministic identification with our brains.

Others noted neuroscientific implications for identity. Ng Wai Hoe, a neurosurgeon at the National Neuroscience Institute in Singapore, asked what we should conclude about a person’s criminality based on a brain scan. And Jose Carmena, an electrical engineer at the University of California, Berkeley, said that some people with deep brain stimulators for Parkinson’s disease or epilepsy say their sense of self is different when the stimulator is on.

A few of the questions I (and others) raised at the forum: Will neurotech and AI provide the greatest benefits to people at the top or the bottom of the socioeconomic hierarchy? Will learning about the brain have an impact on how we see ourselves or free will? How can we reduce bias in medical data? What other principles of biology can be applied to make thinking machines smarter?

Our understanding of the brain is growing quickly, thanks in part to new tools for studying it. Perhaps the biggest revelation is how much more is left to learn.

Source: Psychology Today
