Imagine a classroom in the future where teachers are working alongside artificial intelligence partners to ensure no student gets left behind.

The AI partner’s careful monitoring picks up on a student in the back who has been quiet and still for the whole class, and the AI partner prompts the teacher to engage the student. When called on, the student asks a question. The teacher clarifies the material that has been presented, and every student comes away with a better understanding of the lesson.

This is part of a larger vision of future classrooms where human instruction and AI technology interact to improve educational environments and the learning experience.

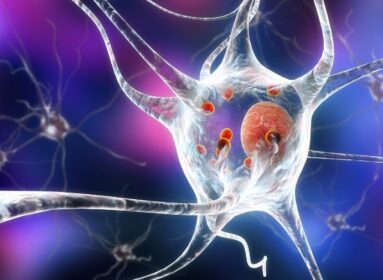

The research will play a critical role in helping ensure the AI agent is a natural partner in the classroom, with language and vision capabilities that allow it not only to hear what the teacher and each student are saying, but also to notice gestures (pointing, shrugs, head shakes), eye gaze, and facial expressions (student attitudes and emotions).

For the past five years, scientists have been working to create a multimodal embodied avatar system, called “Diana,” that interacts with a human to perform various tasks. She can talk, listen, see, and respond to language and gesture from her human partner, and then perform actions in a 3-D simulation environment called VoxWorld. The work has been conducted at Colorado State University, led by Ross Beveridge in their vision lab.

At first, the AI partner is disembodied: a virtual presence on an iPad, for example, where it is able to recognize the voices of different students. So, imagine a classroom of six to 10 children in grade school. The initial goal in the first year is to have the AI partner passively follow the different students, in the way they’re talking and interacting. Eventually, the partner will learn to intervene to make sure that everyone is equitably represented and participating in the classroom.

Let us say I have a Julia Child app on my iPad and I want her to help me make bread. If I start the program on the iPad, the Julia Child avatar would be able to understand my speech. If I have my camera set up, the program allows me to be completely embedded and embodied in a virtual space with her so that she can help me.

She would look at my table and say, “Okay, do you have everything you need?” And then I would say, “I think so.” So, the camera will be on, and if you had all your baking materials laid out on your table, she would scan the table. She would say, “I see flour, yeast, salt, and water, but I do not see any utensils: you are going to need a cup, you are going to need a teaspoon.” After you had everything you needed, she would tell you to put the flour in “that bowl over there.” And then she would show you how to mix it.

Screenshot of the embodied avatar system “Diana.” Credit: Brandeis University.

The avatar system “Diana” is basically becoming an “embodied presence” in the human-computer interaction: she can see what you are doing, and you can see what she is doing. In a classroom interaction, Diana could help guide students through lesson plans, using dialog and gesture, while also monitoring the students’ progress, mood, and levels of satisfaction or frustration.

Using an AI partner for virtual learning could be a fairly natural interaction. In fact, with a platform such as Zoom, many of the computational issues are actually easier since voice and video tracks of different speakers have already been segmented and identified.

Furthermore, in a Hollywood Squares display of all the students, a virtual AI partner may not seem as unnatural, and Diana might more easily integrate with the students online.

Within the context of the CU Boulder-led AI Institute, the research has just started. It is a five-year project, and it is getting off the ground. This is exciting new research that is starting to answer questions about using our avatar and agent technology with students in the classroom.

Source: Tessa Vernell, Brandeis University
